Quick start guide for testing Wi-Fi router performance

Will your devices perform over the long term under real user behavior?

The maximum throughput of a home gateway tends to get the most attention from tech media. Because hitting that number often takes precedence, service providers and manufacturers can overlook how a device truly performs over time and how its robustness affects the end-user experience. In particular, growth in the number of devices connected to the gateway can have a significant impact on these metrics.

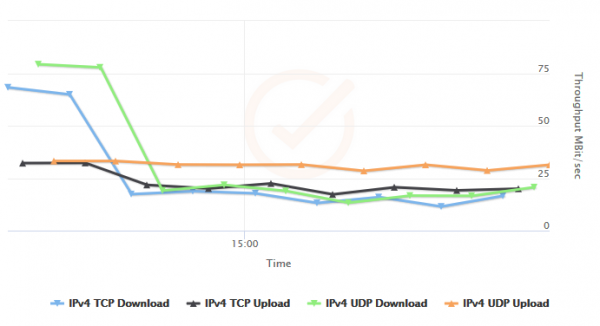

Here are some results from our own testing. This device had impressive, line-rate throughput when it was first tested. As clients were added and the device ran through feature tests such as DNS proxy and DHCP, we saw a significant drop in throughput.

The only way to get the device out of this state was to reboot it, something that significantly impacts the user experience.

What can cause behavior like this? In CDRouter Performance, we stress the importance of running performance testing alongside feature and protocol testing. A device exercising its real functions may have memory leaks, memory fragmentation, or other bugs that can degrade performance over time, especially as more and more hosts are using the router.

With the addition of multi-client performance testing in CDRouter, there's a clear path to take when testing a new device to see how it will perform after utilization of its features over time, and how it will handle the numerous and demanding devices employed by end-users.

What tests should I run to get a better look at device quality?

If you're testing a new device or one that has never been qualified with CDRouter, here is a helpful test package and test sequence to elicit possible performance issues.

The first steps involve building a test package. You can read more about how to do that in our quick start guide for CDRouter.

1. Add the CDRouter top 100 protocol and feature tests

First, we want to build a test package for this device. A typical home gateway has some common features that we have collected into our list of top 100 test cases, a great benchmark for testing. It has the added benefit of exercising some of the core functionality of a device, including NAPT, DHCP, firewall features, and more.

If the device supports IPv6, there is a top 100 IPv6 test list as well. If the device supports both IPv4 and IPv6, add both top 100 lists during this step.

2. Add single-client throughput tests

In the same package as the top 100 tests, add tests perf_1, perf_2, perf_3, and perf_4, which are included in CDRouter Performance. These are throughput tests over TCP and UDP. Equivalent tests exist for IPv6 as well (ipv6_perf_1, ipv6_perf_2, ipv6_perf_3, ipv6_perf_4).

Testers can control the duration of a performance test with the perfDuration testvar. A value of 15-30 seconds is usually good. When we loop the test (below), each iteration adds another set of throughput data points, so a long run gives us plenty of results to analyze.

3. Add Wi-Fi stress tests

The wifi.tcl module contains several tests designed to push your Wi-Fi implementation. It includes client restarts and repeated association/disassociation of connections that can expose problems at this layer or affect throughput over time.

4. Run the package and loop indefinitely

Once we've added the top 100, throughput, and Wi-Fi test cases to a test package, we run the package and set it to loop indefinitely. Since we don't necessarily know how long it will take for a device to exhibit problems, we want to be able to stop it when we're ready.

The key here is doing long-duration testing to catch these issues. CDRouter is well suited to this since we can set up test packages to run overnight or over several days to see just how robust our device is, and which feature behavior ends up triggering performance issues.
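Once a looped run has produced one throughput reading per iteration, a small script can point to where the drop begins. The following is a minimal sketch, not a CDRouter feature: it assumes the readings have already been exported into a plain list of Mbps values, and the function name, the three-loop baseline, the 80% threshold, and the sample numbers are all illustrative assumptions.

```python
from statistics import median


def find_degradation(samples_mbps, baseline_loops=3, threshold=0.8):
    """Return (baseline, index) for the first loop that falls below the threshold.

    The baseline is the median of the first `baseline_loops` readings.
    The index is None when no later reading drops below
    `threshold * baseline`. All parameter defaults are assumptions.
    """
    if len(samples_mbps) < baseline_loops:
        raise ValueError("need at least baseline_loops samples")
    baseline = median(samples_mbps[:baseline_loops])
    later = samples_mbps[baseline_loops:]
    for index, value in enumerate(later, start=baseline_loops):
        if value < threshold * baseline:
            return baseline, index
    return baseline, None


# Made-up readings, one per loop iteration of a single throughput test.
samples = [940.0, 938.0, 941.0, 935.0, 900.0, 610.0, 420.0]
baseline, first_bad = find_degradation(samples)
if first_bad is None:
    print(f"No degradation against a baseline of {baseline} Mbps")
else:
    print(f"Baseline {baseline} Mbps, first degraded loop: {first_bad}")
```

Knowing the loop index where throughput first falls makes it easier to look at which feature or Wi-Fi tests ran just before it.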

5. Simulate 16 clients

Once we have baseline throughput results, it's time to test the router's behavior when multiple end-user devices are connected. CDRouter Multiport allows for the simulation of multiple clients, on multiple interfaces, during any CDRouter testing.

These simulated clients are brought online during CDRouter's startup procedure, which makes them present for whatever testing you are doing! We want to exercise the top 100 test cases once again with multiple clients connected (and cycling the tests through each client) in addition to multi-client throughput testing.

6. Add multi-client throughput and run again

Now that your device has handled some additional protocol level behavior, we can run multiple throughput streams terminated by our simulated hosts. This more intense performance testing begins to maximize the capabilities of the device you're testing. These types of tests can be run by adding perf_multi_1, perf_multi_2, perf_multi_3, and perf_multi_4 to your test package.
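When comparing multi-client results with the single-client baseline, it can help to look at both the aggregate and the spread across clients. The sketch below assumes the per-client throughput values have been collected into a list by hand or by your own tooling; the function name, the result keys, and the numbers are hypothetical and are not part of CDRouter's output format.

```python
def summarize_streams(per_client_mbps, single_client_baseline_mbps):
    """Summarize per-client throughput against a single-client baseline."""
    if not per_client_mbps:
        raise ValueError("need at least one client reading")
    total = sum(per_client_mbps)
    return {
        "clients": len(per_client_mbps),
        "aggregate_mbps": total,
        "slowest_mbps": min(per_client_mbps),
        "fastest_mbps": max(per_client_mbps),
        # Fraction of the single-client result that the aggregate reaches.
        "aggregate_vs_baseline": total / single_client_baseline_mbps,
    }


# Made-up readings for 16 simulated clients and a made-up baseline.
readings = [55.0] * 12 + [40.0] * 4
summary = summarize_streams(readings, single_client_baseline_mbps=940.0)
for key, value in summary.items():
    print(f"{key}: {value}")
```

A low aggregate ratio or a wide gap between the slowest and fastest client are both worth tracking from one loop to the next.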

7. Repeat with 32 clients

How did your device perform with 16 clients? If it's still going strong, try increasing the number of simulated clients to 32. Then re-run the top 100 tests, followed by throughput testing with streams to all 32 hosts. This testing is likely to reveal issues in your device's network processing that arise when it tries to handle today's user network demands!

More experiments and more questions

These steps are great for getting a good idea of how robust your solution is and how it will perform over time. There are many more permutations to try! Will the behavior be different if run over wired, rather than wireless, connections? Will the device perform the same way with different firmware builds? Automation is the key to getting in as many different scenarios as possible!
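One way to keep track of these permutations is to enumerate them up front and feed the list to whatever automation drives your test runs. This is only a sketch of the bookkeeping: the scenario fields and the firmware labels are placeholders, and it does not call any CDRouter API.

```python
from itertools import product


def build_matrix(connections, firmware_builds, client_counts):
    """Return every combination of connection type, firmware, and client count."""
    return [
        {"connection": conn, "firmware": fw, "clients": count}
        for conn, fw, count in product(connections, firmware_builds, client_counts)
    ]


# Placeholder values; substitute your own builds and client counts.
matrix = build_matrix(
    connections=["wired", "wireless"],
    firmware_builds=["build-A", "build-B"],
    client_counts=[1, 16, 32],
)
for scenario in matrix:
    print(scenario)
```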